What's up, what's uppp!

I normally share tools I'm using and news from the AI world. This week I wanted to do something different.

I spent almost a week using, testing, breaking, watching every tutorial and reading every blog post I could find about one feature. So you don't have to.

This one is a full guide on Agent Teams in Claude Code. What it is, how to use it, what to avoid, the whole thing. So expect it to be a bit longer than usual. I just want to make sure this is the only time you have to spend learning how to use it.

Let's get into it.

Agent Teams in Claude Code

This is the biggest update Claude Code has shipped since it launched. And it forced me to change how I work.

I'll be honest.

I'm used to working on one task, one feature at a time.

Build it, test it, make sure it works, move to the next thing. That's how most of us operate. You finish one thing before starting another. It's structured, it's safe and you know where you are.

Last week, Anthropic dropped Agent Teams alongside Claude Opus 4.6. And it completely breaks that pattern.

Here's the idea: instead of one Claude working through your task step by step, you tell it to spin up a team. A lead agent creates teammates, assigns them tasks, and they all work in parallel. Each teammate has its own context window, its own focus, and they talk to each other directly.

This is NOT the same as subagents.

Subagents are like interns reporting back to a manager. They can't talk to each other. Agent Teams is actual collaboration: teammates share findings, challenge each other's approaches, and coordinate independently. Like a room of specialists working together on the same project.

That means you have to start thinking differently.

Instead of "what's the next step," it becomes "what are all the pieces, and which ones can run at the same time."

Instead of doing things in order, you're designing how to break work apart so 3, 4, 5 agents can tackle it simultaneously.

It took me a few days to get used to it.

The first time I tried, I kept wanting to check on each agent, follow the steps one by one. Old habits.

But once it clicks, once you see 5 things happening at the same time that would've taken you the whole morning, you can't go back.

The insight behind it is simple: LLMs get worse as their context gets bigger. The more stuff you throw into one conversation, the harder it is for the model to focus.

By giving each agent a narrow scope and clean context, you get better thinking from each one.

What you can actually use this for

This is the part most people miss. Agent Teams isn't just for coding. It's for any big task you can break into pieces.

If you're in marketing or sales, think about all the tasks you do that involve multiple steps.

Competitor research where one agent analyzes their positioning, another scrapes their landing pages, another drafts your messaging based on the findings.

Prospecting where one agent researches the company, another finds the right contacts, another writes personalized outreach.

Content where one agent researches the topic, another pulls data and stats, another writes the draft.

All running at the same time, sharing what they find as they go. You can have 5 agents doing research simultaneously, each covering a different angle, and the lead synthesizes everything into one report.

If you're building software, the use cases are even more obvious.

Code review with 3 reviewers, each focused on a different area (security, performance, tests).

QA testing where 5 agents test your app in parallel. One person got a prioritized bug report in about 3 minutes.

Debugging with "competing hypotheses," 3-5 agents each investigating a different theory, challenging each other, instead of one agent anchoring on the first thing it finds.

Or feature builds where each teammate owns specific files and they all build simultaneously. One developer built an entire email app in 20 minutes without writing a single line of code.

And honestly, it works for any repetitive process that involves multiple steps.

Data cleanup, report generation, audit checklists, onboarding docs. If you can describe the steps, you can split them across agents.

If work can be split into independent pieces, Agent Teams speeds it up.

How to turn it on

It's still experimental, so you need to enable it manually.

Add this to your Claude Code settings.json (or just ask Claude Code to do it for you):

    {
      "env": {
        "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
      }
    }

That's it. Now you can just tell Claude what you want in plain language.

Something like: "Create a team to review this PR. Spawn three reviewers, one focused on security, one on performance, one on test coverage."

Or: "Spawn four teammates. Backend owns src/api/, Frontend owns src/components/, Tests owns tests/, Docs owns docs/. Each one works independently."

Claude handles the rest. It creates the team, assigns the tasks, and the agents start working.

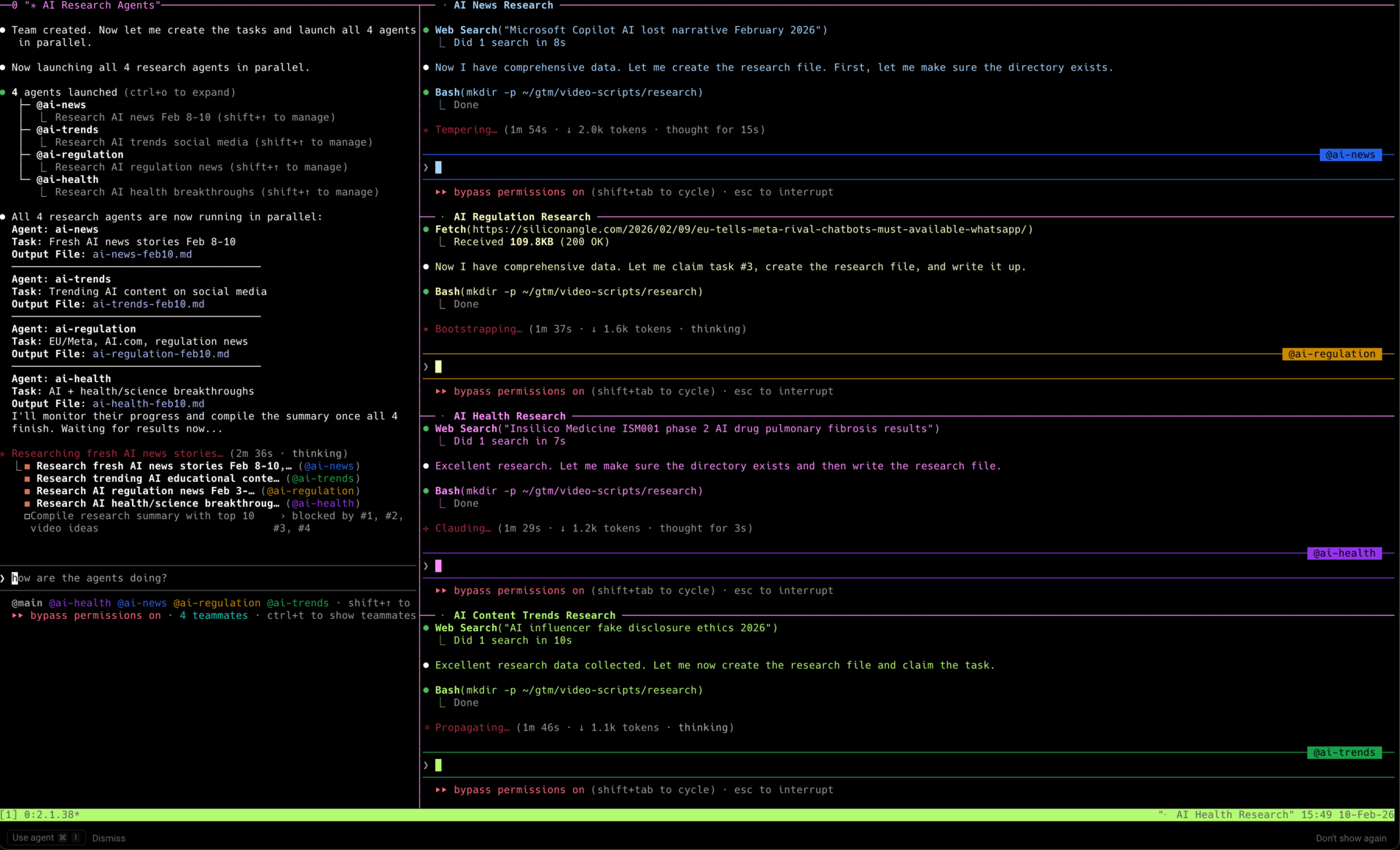
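If you'd rather script the settings.json change than edit it by hand, here's a minimal sketch. It only shows how to merge the flag into whatever settings you already have without wiping your other keys. The function name and the example keys ("some_other_setting", "OTHER_VAR") are made up for illustration. Loading and saving the file is left out on purpose, since where your settings.json lives depends on your setup.

```python
import json

FLAG = "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS"


def enable_agent_teams(settings: dict) -> dict:
    """Return a copy of the settings with the Agent Teams flag set.

    Existing top-level keys and existing env entries are preserved.
    """
    updated = dict(settings)
    env = dict(updated.get("env", {}))
    env[FLAG] = "1"
    updated["env"] = env
    return updated


# Hypothetical existing settings, only here to show that nothing gets lost.
existing = {"some_other_setting": True, "env": {"OTHER_VAR": "x"}}
print(json.dumps(enable_agent_teams(existing), indent=2))
```

You would load your current settings with json.loads, pass them through this function, and write the result back.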

Two ways to watch them work

In-process mode is the default. All teammates run inside your main terminal. You use Shift+Up and Shift+Down to navigate between them. Works in any terminal. The lead agent reports back to you with updates, so you don't necessarily need to see what every teammate is doing.

Split-pane mode gives each teammate its own pane so you can see everyone at once. You just need to install tmux (brew install tmux if you use Homebrew), and it runs inside whatever terminal you already use.

I recommend it if you're the kind of person who wants to see everything happening in real time. It's not required, though. The default mode works fine for most people.

The workflow that actually works

After testing this and reading everything from developers who've been using it, here's the workflow:

Plan first, parallelize second. Start in plan mode. Go back and forth with Claude until you like the plan. This costs about 10,000 tokens. Then spawn the team. A team going in the wrong direction costs 500,000+ tokens. Multiple developers burned through their entire daily token budget because they skipped the planning step.

Use Delegate Mode (Shift+Tab). This is critical. Without it, the lead agent will start coding instead of coordinating. This is the number one complaint I heard. Delegate mode restricts the lead to coordination-only tools: spawning teammates, sending messages, managing tasks. It physically cannot write code. If you're running more than 2 teammates, this isn't optional.

Give detailed spawn prompts. Teammates don't inherit the lead's conversation history. They start blank. If your prompt is "work on the frontend," the teammate wastes its first 50,000 tokens just figuring out what the project is.

Instead, write prompts like you're onboarding a new contractor: what the project is, which files they own, what conventions to follow, what the deliverable is. File paths, not descriptions.
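To make the "onboarding a contractor" idea concrete, here's a rough sketch of a spawn prompt template. This is not a Claude Code API, just a helper I'd use to assemble the text before pasting it in. The class name, field names, and example values are all made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SpawnBrief:
    """Everything a teammate needs to know, since it starts with a blank context."""
    role: str
    project: str
    owned_paths: list[str]
    deliverable: str
    conventions: list[str] = field(default_factory=list)


def build_spawn_prompt(brief: SpawnBrief) -> str:
    """Assemble a plain-text spawn prompt from the brief."""
    if not brief.owned_paths:
        # File paths, not descriptions: refuse to build a prompt without them.
        raise ValueError("give the teammate explicit file paths to own")
    lines = [
        f"You are the {brief.role} teammate.",
        f"Project: {brief.project}",
        "Files you own (do not edit anything else):",
    ]
    lines += [f"  - {path}" for path in brief.owned_paths]
    if brief.conventions:
        lines.append("Conventions:")
        lines += [f"  - {rule}" for rule in brief.conventions]
    lines.append(f"Deliverable: {brief.deliverable}")
    return "\n".join(lines)


# Hypothetical example values.
brief = SpawnBrief(
    role="Backend",
    project="Notification service for a web app",
    owned_paths=["src/api/"],
    deliverable="Working endpoints plus a short summary of what changed",
    conventions=["Follow the existing error-handling style"],
)
print(build_spawn_prompt(brief))
```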

Press Ctrl+T to see the task list. This is your project board. All teammates can see it, claim tasks, mark them complete, and track dependencies.

Things you should do

Start with a code review or QA test as your first team task. No file conflicts, agents work independently, output is a report not code changes. Learn the coordination patterns before doing feature builds.

Keep it to 5-6 tasks per teammate, and size each task carefully. Too small and coordination overhead dominates. Too big and the teammate goes off track before you notice.

Assign clear file ownership to each teammate. There's no file-level locking between agents. If two teammates edit the same file, one will overwrite the other. Be explicit: "Backend owns src/api/notifications/ and migrations. Frontend owns src/components/notifications/."
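Since nothing stops two teammates from editing the same file, it can help to sanity-check your ownership plan before you spawn anyone. Here's a small sketch that flags overlapping paths. It's a standalone helper I'm assuming for illustration, not something built into Claude Code, and it only compares the paths you type in. It doesn't look at the disk.

```python
from itertools import combinations
from pathlib import PurePosixPath


def paths_overlap(a: str, b: str) -> bool:
    """True if the paths are equal or one is nested inside the other."""
    pa, pb = PurePosixPath(a), PurePosixPath(b)
    return pa == pb or pa in pb.parents or pb in pa.parents


def find_ownership_conflicts(
    ownership: dict[str, list[str]],
) -> list[tuple[str, str, str, str]]:
    """Return (teammate_a, path_a, teammate_b, path_b) for every overlapping pair."""
    conflicts = []
    for (mate_a, paths_a), (mate_b, paths_b) in combinations(ownership.items(), 2):
        for path_a in paths_a:
            for path_b in paths_b:
                if paths_overlap(path_a, path_b):
                    conflicts.append((mate_a, path_a, mate_b, path_b))
    return conflicts


plan = {
    "Backend": ["src/api/"],
    "Frontend": ["src/components/"],
    "Tests": ["tests/"],
    "Docs": ["docs/"],
}
print(find_ownership_conflicts(plan))  # no overlaps in this plan
```

If the list comes back non-empty, fix the plan before spawning the team.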

Use the plan approval feature. Add "require plan approval" in your spawn prompt. Teammates submit their plan before writing any code. You review it, approve or reject. This catches bad assumptions early.

Design test output for agents. "If there are errors, Claude should write ERROR and put the reason on the same line so grep will find it." Parseable errors save tokens.
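Here's roughly what "parseable errors" can look like in practice. This is a sketch of a formatter you might add to your own test runner. The function name and the OK/ERROR line format are my assumptions, not a standard.

```python
def format_result(test_name: str, passed: bool, reason: str = "") -> str:
    """Format one test result as a single line that grep can find.

    Failures start with ERROR and keep the reason on the same line,
    even if the original message spanned several lines.
    """
    if passed:
        return f"OK {test_name}"
    one_line_reason = " ".join(reason.split())  # collapse newlines and extra spaces
    return f"ERROR {test_name}: {one_line_reason}"


print(format_result("test_login", True))
print(format_result("test_login", False, "expected 200\n    got 500"))
```

An agent can then run something like grep ERROR on the output and read only the failures.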

Maintain good documentation in your repo. When a new teammate spawns into a fresh context, it needs to orient itself without asking 20 questions. The better your READMEs and CLAUDE.md, the fewer tokens each teammate burns on exploration.

Have agents write progress updates as they work. This isn't just organization, it's a deliberate trick. By writing progress notes, the agent recites its objectives into recent context, which prevents it from drifting off-task during long sessions.

Things you should avoid

You don't need to use Agent Teams for everything. A solo session uses around 200,000 tokens. Three teammates use around 800,000 tokens. Five teammates means at minimum 5x consumption. If it's a simple bug fix or one-file change, use a regular session. Save teams for work that actually benefits from parallelism.

Don't skip plan mode to "save time." 10,000 tokens for a plan vs 500,000+ tokens going in the wrong direction. The math is obvious.
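To put numbers on it, here's the arithmetic using the rough figures from this guide. These are ballpark estimates, not measurements. The break-even part also assumes that a good plan fully prevents the wrong-direction scenario, which is a simplification.

```python
# Rough figures quoted in this guide (estimates, not measurements).
SOLO_SESSION_TOKENS = 200_000
THREE_TEAMMATES_TOKENS = 800_000
PLAN_TOKENS = 10_000
WRONG_DIRECTION_TOKENS = 500_000


def team_cost_multiplier() -> float:
    """How many times more tokens a three-teammate run uses than a solo session."""
    return THREE_TEAMMATES_TOKENS / SOLO_SESSION_TOKENS


def planning_break_even_probability() -> float:
    """Chance of a misdirected team above which planning pays for itself.

    Planning costs PLAN_TOKENS for sure. Skipping it risks WRONG_DIRECTION_TOKENS
    with probability p, so planning wins whenever p * WRONG > PLAN.
    """
    return PLAN_TOKENS / WRONG_DIRECTION_TOKENS


print(f"Team vs solo: {team_cost_multiplier():.0f}x")
print(f"Planning pays off above {planning_break_even_probability():.0%} risk")
```

With these figures, a three-teammate run costs about 4x a solo session, and planning is worth it if there's more than a 2% chance the team heads off in the wrong direction.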

Don't let the lead start coding. Activate delegate mode (Shift+Tab) immediately after spawning your team, not after you notice the lead doing implementation work. By then you've already burned tokens.

Don't use vague spawn prompts. "Review the authentication module" is bad. "Review authentication module at src/auth/ for security vulnerabilities. Focus on token handling, session management, input validation. App uses JWT in httpOnly cookies. Report issues with severity ratings" is better.

Don't broadcast when you can direct message. Broadcasts send a separate message to every teammate. If your message is only relevant to one agent, send it directly. Broadcasts are expensive.

Don't expect the lead to know when everything's done. Task status sometimes lags. Teammates forget to mark tasks complete, dependent tasks stay blocked. If the lead announces it's done, tell it to check all task statuses and verify teammates actually finished.

Don't try to nest teams or have teammates spawn their own teammates. Not supported by design. One team per session.

Don't close tmux panes while agents are running. Auto-closing panes breaks the ability to create another team in that session. You'll get "Session not found" errors that force a full Claude Code restart.

Don't expect /resume to restore your teammates. If you end a session that had a team running, /resume and /rewind will NOT bring back the teammates. The lead will try to message agents that no longer exist. Always start fresh.

Don't dump massive output to agents. If your tests print thousands of lines, log details to files instead and let agents retrieve what they need. Claude can't efficiently process massive output dumps. They just burn context on noise.
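One way to do that is a small wrapper that saves the full output to a file and hands the agent only a short summary. This is a sketch under my own assumptions: failures are lines starting with ERROR, and the function name and summary format are made up.

```python
import tempfile
from pathlib import Path


def summarize_output(output: str, log_path: Path, max_errors: int = 20) -> str:
    """Write the full output to log_path and return a short summary for the agent."""
    log_path.write_text(output)
    lines = output.splitlines()
    errors = [line for line in lines if line.startswith("ERROR")]
    shown = errors[:max_errors]
    summary = [f"{len(lines)} lines of output, {len(errors)} errors. Full log: {log_path}"]
    summary += shown
    if len(errors) > len(shown):
        summary.append(f"... {len(errors) - len(shown)} more errors in the log")
    return "\n".join(summary)


# Small demo with fake test output written to a temporary directory.
fake_output = "\n".join(["ok test_a", "ERROR test_b: timeout", "ok test_c"])
with tempfile.TemporaryDirectory() as tmp:
    print(summarize_output(fake_output, Path(tmp) / "test_run.log"))
```

The agent sees a handful of lines and can open the log file only if it needs the details.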

Don't start with a 5-agent feature build on day one. Start with 2 teammates on a review task. Then try 3 on a debug session. Scale from there.

The crazy stat

Anthropic published this demo where 16 Claude agents built a C compiler from scratch. 100,000 lines of Rust code. 99% pass rate on the GCC torture test suite. It compiles a bootable Linux kernel on x86, ARM, and RISC-V, plus QEMU, FFmpeg, SQLite, PostgreSQL, Redis, and Doom.

Each agent ran in its own Docker container, picked tasks automatically by writing lock files, resolved Git merge conflicts on its own, and pushed code with zero human supervision.
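If you're wondering how "picking tasks by writing lock files" can work at all, here's a minimal sketch of the general technique on a local filesystem. To be clear, this is my illustration of the idea, not the code from the experiment. The function names, the lock directory, and the .lock naming are all assumptions. The trick is that creating a file with an "exclusive" flag fails if the file already exists, so only one agent can win each task.

```python
import os
import tempfile
from pathlib import Path
from typing import Optional


def try_claim(task_id: str, agent: str, lock_dir: Path) -> bool:
    """Try to claim a task by atomically creating its lock file."""
    lock_dir.mkdir(parents=True, exist_ok=True)
    lock_path = lock_dir / f"{task_id}.lock"
    try:
        # O_CREAT | O_EXCL fails with FileExistsError if the lock already exists.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False
    with os.fdopen(fd, "w") as handle:
        handle.write(agent)  # record who holds the task
    return True


def claim_next(tasks: list[str], agent: str, lock_dir: Path) -> Optional[str]:
    """Return the first task this agent manages to claim, or None if all are taken."""
    for task_id in tasks:
        if try_claim(task_id, agent, lock_dir):
            return task_id
    return None


# Demo: two hypothetical agents claim from the same list and never collide.
with tempfile.TemporaryDirectory() as tmp:
    locks = Path(tmp) / "locks"
    tasks = ["lexer", "parser"]
    print(claim_next(tasks, "agent-1", locks))
    print(claim_next(tasks, "agent-2", locks))
    print(claim_next(tasks, "agent-3", locks))
```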

The researcher who ran it, Nicholas Carlini from Anthropic's Safeguards team, said the experiment both excites him and leaves him "feeling uneasy." He didn't expect this to be possible this early in 2026.

The cost: $20,000 in API tokens over two weeks. 2 billion input tokens, 140 million output tokens.

That's an extreme example. But the fact that it worked at all tells you where this is going.

The shift

Here's what all the developers who've been using this keep saying: the hard part isn't prompting anymore. It's task decomposition. How you break up the work, assign file ownership, and design the right team structure.

The job is becoming less "individual contributor" and more "engineering director." You define what needs to happen, Claude handles the implementation.

Give each agent less to think about, and it thinks better. That's the whole insight.

What’s happening at Kleva

For those of you who are new here: I'm a cofounder of Kleva. We build AI collection agents for lending companies in LATAM.

January was our best month. Revenue grew 58% month over month, usage grew 58%, and we had zero churn.

We also shipped something I'm proud of.

Our AI calling agent now detects if it reaches a voicemail or a real person in the first few seconds. If it's a voicemail, we don't charge the client for that call.

That decision actually reduced our short-term revenue. But I know it's the right call. You don't build trust by charging people for calls that never reached anyone.

If you have a lending business and want to see how Kleva works, reply and I'll set up a demo.

We're hiring

All of this, Agent Teams, multi-model workflows, the speed everything is moving, is exactly why we're looking for a prompt engineer at Kleva.

If you're someone who thinks in prompts and agent architecture, who gets excited about designing how AI agents work together, we want to talk to you. Or if you know someone like that, send them our way.

Reply to this email and I'll share the details.

That's it for this week.

If you try Agent Teams, or have thoughts on how this changes things, reply. I read everything.

-Ed
