What's up, what's uppp!

You're getting this email because you subscribed to aidstation, the newsletter where I share what I'm using, what I'm learning, and what's happening in AI. If you're new here, welcome.

Let's get into it.

What I've been using

ClawdBot Update: what I learned after two weeks of actually using it

Last week I talked about ClawdBot.

If you missed it, here’s the short version:

It's a 24/7 AI that runs on your own server and talks to you through Telegram, WhatsApp, Slack, or whatever you use. It remembers everything, it's proactive, and it can actually do things, not just answer questions.

If you want the full breakdown and setup guide, check out last week's newsletter.

This week I couldn't stop using it. And I have some takes.

Where it's better than Claude Code

I've been using Claude Code as a personal assistant for months. Calendar, email, Slack, Linear, CRM, all connected through MCPs.

It worked, but keeping context between conversations was the real issue.

Every time you start a new Claude Code session, you're starting from scratch. Unless you had everything perfectly organized in your CLAUDE.md and folder structure, the AI didn't remember what you told it yesterday.

You had to re-explain things, re-load context, set things up again. It felt more like onboarding a new intern every morning than working with an assistant.

ClawdBot makes the assistant part way easier. It's conversational. You tell it something and it just remembers.

There's still a folder structure and context setup behind it, but ClawdBot takes care of that for you. The more you use it, the more it knows.

This morning I had a bunch of follow-ups sitting in the CRM. I told ClawdBot to pull them, review the previous email threads for each one, and draft a response for each lead.

Then it created a task for me to review each draft before sending.

What would have been an hour of context-switching was done in 15 minutes. I just reviewed, tweaked a couple lines, and hit send.

The Google Sheets hack

This one surprised me.

I connected ClawdBot to a Google service account. Now I can invite that service account to any Google Sheet and ClawdBot can read, write, and update it.

I set up a scorecard for our Q1 L10 meetings with all the metrics I care about. But half of those numbers come from the CRM, some from ads platforms, some from other tools.

Before, I'd spend time pulling numbers from five different places and doing math.

Now ClawdBot goes into each tool, pulls the data, and fills in the sheet. I didn't enter a single number manually. I just said "update the scorecard" and it did it.

If you run any kind of weekly review or reporting, this is the move. Set up the template once, connect the service account, and let ClawdBot do the data entry.
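Here's a minimal sketch of that data-entry step, assuming each tool can be wrapped in a small fetch function. `fetch_crm`, `fetch_ads`, the metric names, and the numbers they return are all made up for illustration. ClawdBot's actual integrations aren't shown here. The idea is that the template (the column order) is fixed once, and each update merges whatever the sources return into a row in that order.

```python
from typing import Callable, Dict, List

Metrics = Dict[str, float]

# Hypothetical fetchers with placeholder numbers. In a real setup each one
# would call the CRM, ads platform, or other tool.
def fetch_crm() -> Metrics:
    return {"new_leads": 42, "deals_closed": 5}

def fetch_ads() -> Metrics:
    return {"ad_spend": 1250.0, "clicks": 3100}

# The scorecard template: defined once, mirrors the sheet's header row.
SCORECARD_COLUMNS = ["new_leads", "deals_closed", "ad_spend", "clicks", "cost_per_click"]

def build_scorecard_row(week: str, sources: List[Callable[[], Metrics]]) -> list:
    """Pull every source, merge the metrics, and order them by the template."""
    metrics: Metrics = {}
    for fetch in sources:
        metrics.update(fetch())
    # Derived metric: the "doing math" step that used to be manual.
    if metrics.get("clicks") and "ad_spend" in metrics:
        metrics["cost_per_click"] = round(metrics["ad_spend"] / metrics["clicks"], 2)
    # Missing metrics become blank cells instead of breaking the update.
    return [week] + [metrics.get(col, "") for col in SCORECARD_COLUMNS]

print(build_scorecard_row("Week 1", [fetch_crm, fetch_ads]))
# ['Week 1', 42, 5, 1250.0, 3100, 0.4]
```

Writing the row into the sheet itself could be done with a library like gspread (`gspread.service_account()` to authenticate as the service account, then `append_row` on the worksheet). That part is left out so the sketch runs without credentials.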

Skills without the setup

If you've been following this newsletter, you know I'm big on skills in Claude Code, custom workflows you can trigger for repetitive tasks.

ClawdBot does this too, but you don't have to configure anything.

Here's an example. Similar to the follow-ups thing, I asked ClawdBot to help me prepare for a couple of meetings. We went back and forth until I had exactly the prep I wanted.

Once we nailed it, I told ClawdBot: from now on, when I ask you to prepare for a meeting, this is what I want.

Now every time I ask, it already knows what to do. On top of that, it's connected to my calendar, so it knows which meetings are coming up. Same workflow, every time, without me having to set up a skill file or write any config.

This makes way more sense to me than setting up skills in Claude Code. The assistant learns from the conversation. You teach it once by doing the thing together, and it remembers.
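To make "teach it once" concrete, here's a hypothetical sketch of what remembering a workflow could look like: the agreed instructions get saved under a trigger phrase in a file, and later requests that mention the trigger pull them back. `WorkflowMemory`, the JSON file, and the exact-phrase matching are all assumptions for illustration, not necessarily how ClawdBot stores its memory.

```python
import json
from pathlib import Path
from typing import Dict, Optional

class WorkflowMemory:
    """Hypothetical store mapping a trigger phrase to instructions agreed on in chat."""

    def __init__(self, path: Path):
        self.path = path
        # Load anything taught in earlier sessions so it survives restarts.
        self.workflows: Dict[str, str] = (
            json.loads(path.read_text()) if path.exists() else {}
        )

    def teach(self, trigger: str, instructions: str) -> None:
        """'From now on, when I ask you to X, this is what I want.'"""
        self.workflows[trigger.lower()] = instructions
        self.path.write_text(json.dumps(self.workflows, indent=2))

    def recall(self, request: str) -> Optional[str]:
        """Return saved instructions if the request mentions a known trigger."""
        text = request.lower()
        for trigger, instructions in self.workflows.items():
            if trigger in text:
                return instructions
        return None
```

A new `WorkflowMemory` pointed at the same file recalls what an earlier one was taught, which is the part that matters: the workflow outlives the conversation. A real assistant would match requests by meaning rather than by an exact phrase.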

What I don't love (yet)

For anything heavy, Claude Code is still better. Big documents, development work, anything that needs a lot of context in one go.

The main issue I had is context compaction. There's a limit on how much conversation fits in context, so as the conversation grows, ClawdBot has to compact it, keeping the important parts and discarding the rest.
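Here's a rough sketch of what compaction means in general, assuming a simple strategy: once the history goes over a token budget, older messages get folded into one summary and the most recent ones are kept word for word. Counting tokens by words and the `summarize` stub are placeholders (a real assistant would use a tokenizer and have the model write the summary), and this isn't ClawdBot's actual implementation.

```python
from typing import List

def count_tokens(text: str) -> int:
    # Placeholder: approximates tokens by words; real systems use a tokenizer.
    return len(text.split())

def summarize(messages: List[str]) -> str:
    # Placeholder: a real assistant would have the model write this summary.
    return "Summary of earlier conversation: " + " | ".join(m[:30] for m in messages)

def compact(history: List[str], budget: int, keep_recent: int = 4) -> List[str]:
    """Keep recent messages verbatim; fold everything older into one summary."""
    total = sum(count_tokens(m) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # still fits, or nothing old enough to fold
    cut = len(history) - keep_recent
    older, recent = history[:cut], history[cut:]
    return [summarize(older)] + recent
```

The weak spot is the summary: if it drops a detail that mattered, the assistant loses track of it, which is one way a long conversation can go sideways.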

Sometimes it struggles with this.

It would get stuck, and I'd have to reset the whole conversation. I've been working with ClawdBot itself to set up workflows that improve how it handles this.

The cool part is that you can have it build on itself.

But it's still more tedious than Claude Code. And for some tasks I decided to keep using Claude Code, especially when I need to actually look at documents.

It's way easier to read a document on your computer than to have it sent as a file, download it, and go back and forth.

That's actually the other thing.

Most of the platforms you're going to be using your agent through have a character limit. So anything long gets sent as a document file instead of a message.

It works, but it breaks the flow.
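A minimal sketch of that fallback, assuming Telegram as the channel (its Bot API caps a single text message at 4,096 characters; other platforms use different limits). `Delivery` and `plan_delivery` are hypothetical names, and the actual sending would go through whatever bot library you use.

```python
from dataclasses import dataclass
from typing import Optional

TELEGRAM_TEXT_LIMIT = 4096  # max characters in one Telegram Bot API text message

@dataclass
class Delivery:
    kind: str                           # "message" or "document"
    text: str                           # message body, or a short caption for the file
    attachment: Optional[bytes] = None  # file contents when sent as a document

def plan_delivery(reply: str, limit: int = TELEGRAM_TEXT_LIMIT) -> Delivery:
    """Send short replies inline; fall back to a file when the reply is too long."""
    if len(reply) <= limit:
        return Delivery("message", reply)
    caption = f"Reply was {len(reply)} characters, so it's attached as a file."
    return Delivery("document", caption, reply.encode("utf-8"))
```

The trade-off is right there in the branch: anything past the limit stops being chat and becomes a download.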

Other things I've been using it for

Health tracking: I was using Warp as a health advisor before, but ClawdBot handles this way better (I wrote about this here).

Organizing Notion: keeping pages updated without opening it myself. I drop ideas, things I want to read, and things I want to listen to, and it's all organized when I need it.

Creating tickets in Linear from a quick message

Daily briefing every morning: calendar, pending tasks, important emails

People are finding all kinds of uses.

Someone set it up as a home PM where they drop topics all week and it sends a roundup every Sunday.

Another person connected it to Todoist and had ClawdBot build its own skill for automating tasks, all from within a Telegram chat.

One more thing: Mac Mini vs VPS

If you've seen people talking about ClawdBot online, you've probably noticed a lot of them are buying Mac Minis to run it.

…

If you thought you needed to buy one, you don't. I run mine on a virtual machine. No hardware to plug in, no setup to worry about, and you can spin it up or tear it down whenever you want.

The bottom line

ClawdBot as an assistant: follow-ups, CRM, health tracking, meeting prep, reports, data entry, organizing your stuff.

Claude Code for building, development, documents, and anything that needs deep context.

I'm not replacing one with the other. I'm using both for what they're each best at.

If you set up ClawdBot after last week's newsletter, reply and tell me what you're using it for. I want to steal your workflows.

Browser Use

I've talked about Firecrawl before for scraping and pulling data from the web. It's great for that. But sometimes you need more than just reading a page: you need to actually interact with it. Click buttons, fill out forms, log in, navigate between steps.

Browser Use is an open source tool that gives AI agents full browser control. You describe what you want in plain text, and it goes and does it.

It's not a Chrome extension. It uses Playwright under the hood, which means it can run headless or with a visible browser. It sees the page through a combination of DOM snapshots and screenshots, so it understands what's on screen the same way you do.

What makes it interesting: it handles logins, bypasses captchas, and keeps sessions alive. So you can automate things that require authentication, not just public pages.

A couple of use cases I see: scraping data that requires you to be logged in, automating repetitive tasks across web apps, filling forms, monitoring pages for changes, testing user flows.

It works with Claude, OpenAI, Gemini, DeepSeek, or even ClawdBot if you want to give your assistant full browser control on top of everything else it already does.

What caught my attention this week

Anthropic blocked everyone using Claude outside of Claude Code

If you use Claude through any third-party tool, this matters.

On January 9, without warning, Anthropic flipped a switch. Tools like OpenCode and Cursor stopped being able to use Pro and Max subscriptions.

The error message: "This credential is only authorized for use with Claude Code."

The backstory: developers had figured out how to spoof Claude Code's headers to get unlimited tokens on a $200/month plan. The same usage through the API would cost $1,000+.

Some were running autonomous coding loops overnight.

The wildest part: they discovered that people at xAI, Elon Musk's AI lab, were using Claude through Cursor to help build Grok.

Anthropic's own model being used to build a competitor.

DHH, the creator of Ruby on Rails, called it "very customer hostile."

This made some developers cancel their subscriptions on the spot.

OpenAI jumped on it immediately, publicly welcoming those developers to use their models in OpenCode and other open-source tools.

If you're using your Max plan with ClawdBot or any other third-party tool, be careful. This could affect you too.

The context: Anthropic is raising $10 billion at a $350 billion valuation. They're preparing for an IPO. Protecting their ecosystem makes business sense, even if developers didn't love the execution.

Davos: the 4 most powerful AI CEOs don't agree on anything

Last week was the World Economic Forum in Davos. Musk, Jensen Huang, Nadella, and Amodei were all there.

And they couldn't disagree more.

Musk said AI will be smarter than any individual human by the end of this year.

Then he posted on X: "We have entered the Singularity" and "2026 is the year of the Singularity."

Jensen Huang called AI "the largest infrastructure buildout in human history." But instead of doom, he talked about jobs.

He said plumbers, electricians, and construction workers building data centers are going to make six-figure salaries. "You don't need a PhD in computer science to make a great living."

Nadella had the most interesting framing. He called data centers "token factories" and proposed a new macroeconomic indicator: "Tokens per Dollar per Watt."

His warning: if AI only grows inside tech companies, it's a bubble.

The stat that hit me: only 10-12% of companies are seeing real benefits from AI. 56% report zero.

And then Amodei, the CEO of Anthropic, compared selling Nvidia's H200 chips to China to "selling nuclear weapons to North Korea and bragging that Boeing made the casings."

This is especially spicy because Nvidia just invested $10 billion in Anthropic.

You can watch the full conversations on the World Economic Forum website or on their YouTube channel. Each one was a separate session with BlackRock CEO Larry Fink.

Nobody agrees on what AGI is (and everyone's definition conveniently helps their story)

This keeps coming up and I think it's worth talking about, because everyone has a different answer and it's confusing.

Musk says we've entered the Singularity.

Altman said AGI "kinda went whooshing by" (I mentioned this a few weeks ago).

Sequoia just published a piece called "2026: This is AGI" where they show an AI agent that found the perfect recruiting candidate in 31 minutes. Searching LinkedIn, cross-referencing Twitter, filtering by engagement, and even drafting the outreach email.

And then Demis Hassabis, the CEO of Google DeepMind, said at Davos that current AI systems are "nowhere near" AGI.

Dario Amodei from Anthropic? He says maybe 12-24 months.

Here's what I think is actually happening: everyone defines AGI in a way that benefits their narrative.

The companies with products already in market say it's here. The ones still building say it's close but not yet. The researchers say it needs more breakthroughs.

The honest answer is probably that nobody really knows. But the tools we have right now are already changing how people work.

Whether you call that AGI or not doesn't really matter if it's saving you hours every day.

Humans& raised $480M in seed, the biggest seed round in history

This one is wild.

A three-month-old startup called Humans& just raised $480 million at a $4.48 billion valuation. Seed round. As in, first round of funding.

When you look at the team, it kind of makes more sense how they pulled it off.

Andi Peng, who worked on reinforcement learning for Claude 3.5 through 4.5 at Anthropic.

Yuchen He, who helped build Grok at xAI.

Georges Harik, Google's seventh employee, the guy who worked on Gmail, kicked off Google Docs, and led the acquisition of Android.

Noah Goodman, a Stanford professor who did stints at DeepMind.

Investors include Nvidia, Jeff Bezos, and GV (Google Ventures).

What they're building: AI that helps humans collaborate with each other, not AI that replaces humans.

Think an AI-powered messaging app that coordinates teams, synthesizes opinions, and asks questions like a colleague would. Their CEO described it as "connective tissue" between humans and machines.

The origin story: Andi Peng left Anthropic after watching demos of Claude working alone for hours. She posted on X: "That was never my motivation. I think of machines and humans as complementary."

Two things worth noting: they don't have a product yet. And roughly half of the founding team has already left.

Still, $480M seed at $4.48B valuation with no product is a statement about where the money thinks AI is going. Not autonomous agents, but agents that help people work together.

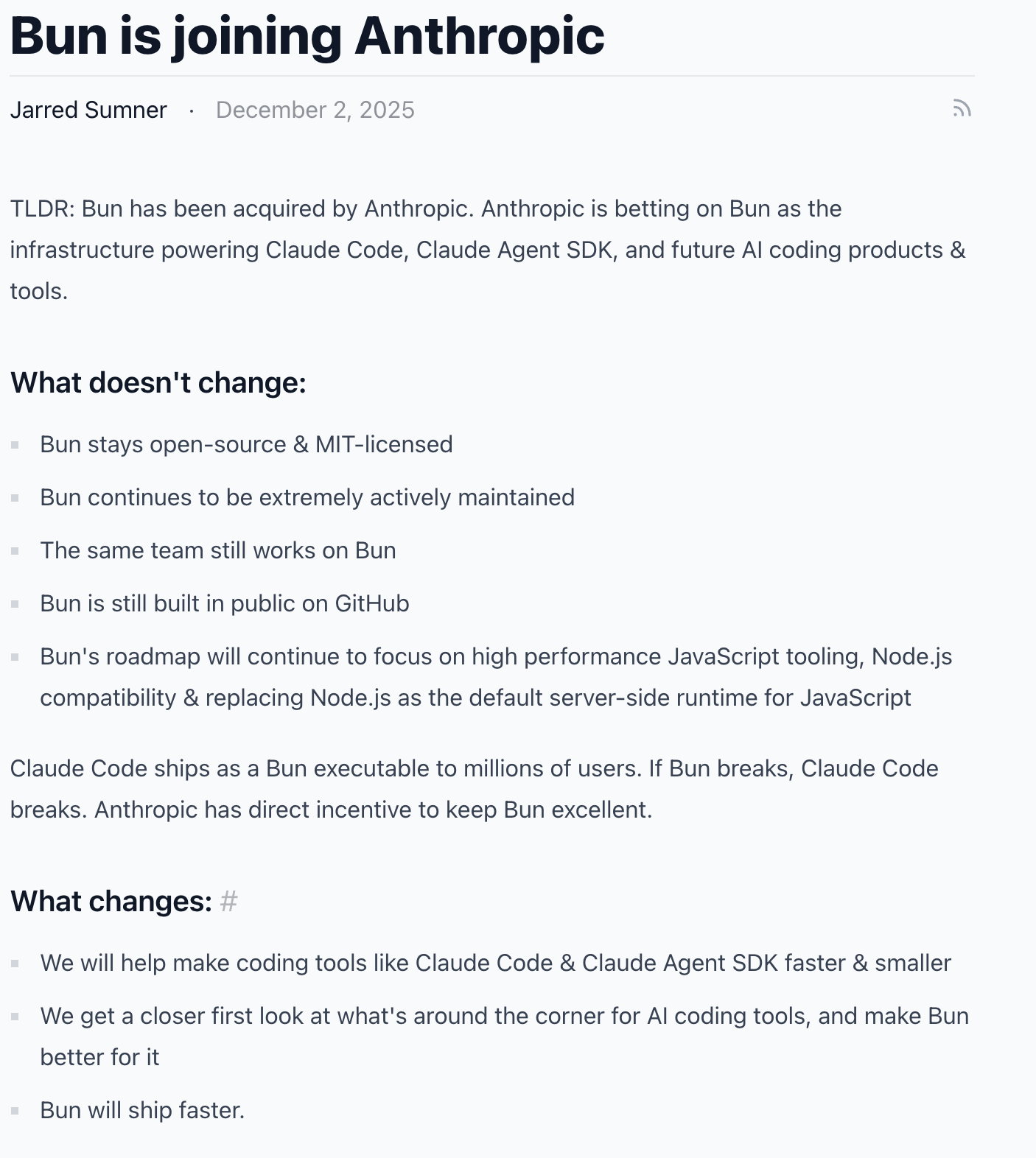

Anthropic bought Bun and Claude Code hit $1B in run-rate revenue

Claude Code reached $1 billion in run-rate revenue in just 6 months.

To put that in perspective, Anthropic's total run rate is now $9 billion, up from $4 billion in July. Their target for 2026 is $20-26 billion.

To keep that momentum going, they acquired Bun, the JavaScript runtime that's been getting a lot of attention for being ridiculously fast. This is Anthropic's first-ever acquisition.

The idea: make Claude Code's infrastructure faster so it can handle the growing demand.

Claude Code actually ships as a Bun executable, so if Bun breaks, Claude Code breaks. They have a direct incentive to keep it excellent.

Mike Krieger, Anthropic's CPO, said it directly: "Bringing the Bun team into Anthropic means we can build the infrastructure to compound that momentum."

Bun stays open source under the MIT license. Same team, same mission.

Meanwhile, Anthropic is raising $10 billion at a $350 billion valuation. For context, that would make them one of the most valuable private companies in the world.

A new model just dropped and it might be a game changer

I usually test things before I mention them here, but this one literally came out today and I didn't want to leave it out.

Moonshot AI, a Chinese AI lab, just released Kimi K2.5. Open source, 1 trillion parameters, and benchmarks on par with Opus 4.5, GPT 5.2, and Gemini 3 Pro.

The headline feature: Agent Swarm.

The model can spin up as many as 100 sub-agents on its own, running up to 1,500 tool calls across parallel workflows. No predefined workflows, no hand-crafted roles. It figures out how to split the work by itself. They say it cuts execution time by up to 4.5x compared to a single agent.
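This isn't Moonshot's implementation, just a generic sketch of the parallel fan-out idea using Python's asyncio: a task is split into subtasks, sub-agents run concurrently up to a cap, and the results come back in order. `sub_agent` and `split` are hypothetical stubs. In K2.5 the model reportedly decides the split itself, whereas here it's hard-coded.

```python
import asyncio
from typing import List

async def sub_agent(subtask: str) -> str:
    # Hypothetical stand-in: a real sub-agent would call the model and its tools.
    await asyncio.sleep(0.1)  # simulate a slow tool call
    return f"done: {subtask}"

def split(task: str, n: int) -> List[str]:
    # Hard-coded split for illustration; an agent swarm would plan this itself.
    return [f"{task} (part {i + 1}/{n})" for i in range(n)]

async def run_swarm(task: str, n_agents: int, max_parallel: int = 100) -> List[str]:
    """Run one sub-agent per subtask, at most max_parallel at a time."""
    limit = asyncio.Semaphore(max_parallel)

    async def guarded(subtask: str) -> str:
        async with limit:
            return await sub_agent(subtask)

    # gather runs the coroutines concurrently and returns results in input order.
    return await asyncio.gather(*(guarded(s) for s in split(task, n_agents)))
```

With 10 subtasks that each take about 0.1 seconds, `asyncio.run(run_swarm("research competitors", 10))` finishes in roughly 0.1 seconds instead of a full second. That's the same kind of speedup, on a toy scale, that parallel sub-agents are meant to deliver.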

But the real story is the price. Kimi K2 was already cheaper on input and 30x cheaper on output than Claude Opus 4. If K2.5 is anywhere near that, you're looking at frontier-level performance at a fraction of the cost.

That's it for this week.

If any of this was useful, or if there’s something you’d improve, reply and let me know. I read everything.

-Ed

Did you enjoy this newsletter? Say thanks by checking out one of my businesses:
